
Photo credit: How-To Geek

All we hear about AI these days is how it could power ground-breaking technology and drastically change various aspects of the average person’s life. However, AI is already making a dent in everyday life. For instance, a line of Google Nest products applies AI to everyday household items. CNET gives the examples of the Nest Hello smart doorbell, the Nest Secure alarm system, the Nest Yale Lock and the Nest Cam IQ Outdoor security camera. The alarm system and security camera both use AI to help with facial recognition along with motion sensing. All of these applications of AI are helping people make their homes “smarter.”

Another Google Nest item is the Nest Learning Thermostat. The Nest website explains that the thermostat uses AI to “learn what temperature you like and build a schedule around yours.” It recognizes daily patterns and learns a routine, which saves energy and adds convenience. AI has the potential to change life in many little ways, as well as to lead technology into great breakthroughs.

Sources: CNET, Nest, CNBC
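The thermostat's schedule learning can be illustrated with a small sketch. Nest does not describe its actual algorithm in the material cited here, so everything below is an assumption made for illustration: the function name `learn_schedule`, the 20.0 degree default, and the idea of averaging manual adjustments per hour are all hypothetical.

```python
from collections import defaultdict
from statistics import mean


def learn_schedule(adjustments, default_temp=20.0):
    """Build a 24-entry hourly schedule from manual adjustments.

    adjustments: iterable of (hour, temperature) pairs, one per manual change.
    This is an illustrative stand-in, not Nest's real method.
    """
    # Group every temperature the user chose by the hour it was chosen in.
    by_hour = defaultdict(list)
    for hour, temp in adjustments:
        by_hour[hour % 24].append(temp)

    schedule = []
    current = default_temp
    for hour in range(24):
        # If the user has a habit at this hour, adopt its average;
        # otherwise keep holding the most recent setpoint.
        if by_hour[hour]:
            current = round(mean(by_hour[hour]), 1)
        schedule.append(current)
    return schedule
```

For example, `learn_schedule([(7, 21.0), (7, 22.0), (22, 17.0)])` keeps the default until 7:00, holds 21.5 through the day, and drops to 17.0 from 22:00 onward.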


Photo Credit: Business Insider

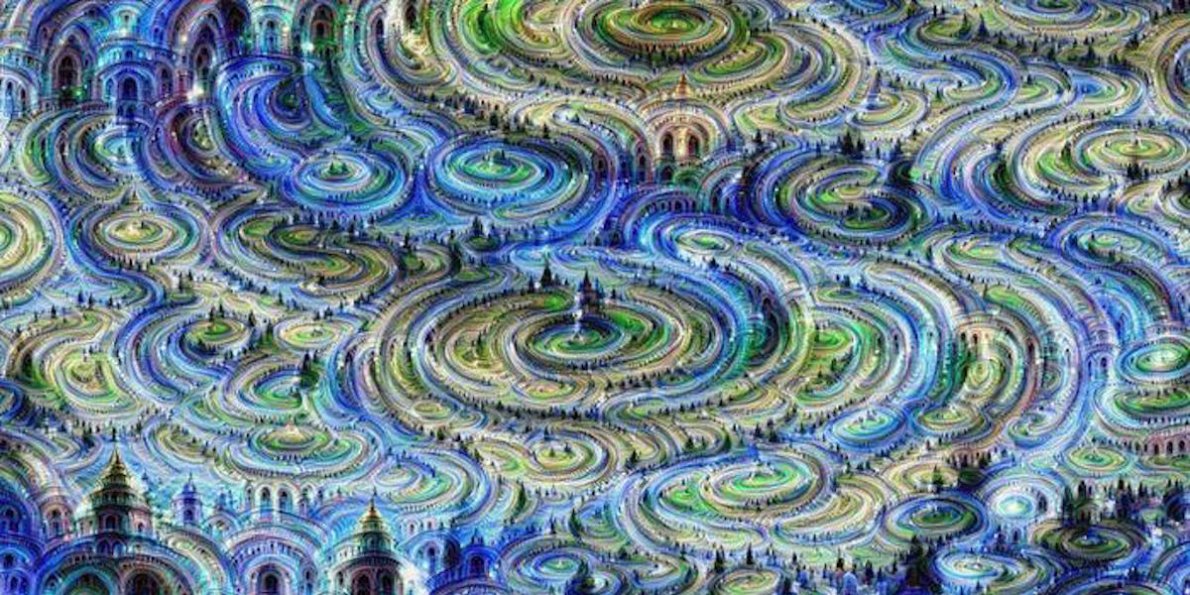

DeepArt.io is a website that uses deep neural networks to identify and combine stylistic elements of two separate images, a technique known as style transfer. It does not feel like traditional “artificial intelligence,” though: no coding experience is required. The program relies on a neural algorithm developed by Leon Gatys and colleagues at the University of Tübingen in 2015. The same technique has been used in photo filters on Facebook and Prisma, as well as on moving images. Kristen Stewart used style transfer in her directorial short film debut Come Swim to redraw a brief dream sequence.

In recent years, these kinds of programs have proliferated, using different techniques to create AI-assisted works which are both sophisticated and beautiful. In fact, one study says that AI-generated art now looks more convincingly human than work at Art Basel. For better or worse, the consequences could transform mainstream art production and consumption, as well as the role of artists.

First, a little history. The earliest known generative computergraphik, created around 1960 by Georg Nees, the German “father of computer art”, consisted mainly of black-and-white drawings of shapes. The first computer-generated music piece, Lejaren Hiller and Leonard Isaacson’s Illiac Suite for String Quartet, came in 1957. Both experiments were aimed at academic audiences, and were not very “artistic.”

We’ve come a long way since then. Deepjazz, created by Princeton University Ph.D. student Ji-Sung Kim, used neural networks to detect jazz musical patterns and generate new songs. Nvidia recently published a paper documenting how its researchers generate life-like images with incredibly convincing results. The algorithm takes images of a winter street and predicts what it would look like during summer. Gene Kogan, a generative artist and author of Machine Learning for Artists, has used similar methods to make realistic images of places. Cornell University and Adobe researchers have also been working on a sophisticated version of style transfer for photos. The process they’re developing can even take the sunset lighting of one photo and apply it to a daytime photo of another location. Google, too, has been working on “supercharging style transfer.” Its researchers developed a way to combine multiple styles, mixing them like paints.

These tools may change how we value artists, too. We’re likely to see a proliferation of algorithmic art in mainstream culture. These tools will take some of the burden off artists, but may lead to fewer job opportunities in the digital economy. Taobao, a Chinese shopping website, created banner ads for its Singles’ Day shopping holiday by training algorithms on the design patterns of successful ads. Airbnb also showed off a tool which uses algorithmic art techniques to convert sketches into fully designed and functional prototypes. The vibrant world of artistic potential that’s opened up by algorithms will be darkened by the potential for artists to lose control.

Sources: Slate, Artnet, Futurism

Photo credit: Pixabay

It hasn't been two years since AlphaGo beat world champion Lee Sedol in Go, but Google's DeepMind has already launched a new AI program to take its place.

In December 2017, AlphaZero defeated Stockfish, a world-class chess engine, after only four hours of training. It had no previous experience with chess beyond the basic rules, but the results were incredible: the AI went undefeated, winning 28 games and drawing the rest of a 100-game match. It also beat its predecessor AlphaGo at Go, as well as Elmo at shogi.

With this breakthrough, experts were able to discover more about the thought process of a machine. According to Demis Hassabis, the AI "doesn't play like a human, and it doesn't play like a program . . . It plays in a third, almost alien, way." As he analyzed AlphaZero's games, he noticed it played some outlandish yet positionally profound moves. Hassabis offers an explanation for this strange behavior. Rather than learning from example games played by humans, AlphaZero was taught solely by reinforcement learning: it played games against itself without any human input. DeepMind also says it takes an "arguably more human-like approach", one that relies more on evaluation and planning than on calculating lengthy variations.

Ever since 1997, when Deep Blue beat world chess champion Garry Kasparov, computers have revolutionized the game of chess. Now powerful machine-learning programs like AlphaGo and AlphaZero are making a drastic impact on board games in general. It leaves us wondering: who will defeat AlphaZero?

Google Code-in and the Google Code-in logo are trademarks of Google Inc.

Are you interested in working on projects with open-source organizations alongside peers from around the world? Getting first-hand experience in the world of project development? Interested in coding, quality control, documentation, or outreach? Just getting into the world of programming? Google Code-in, which opened for registration on November 28th and will run until January 17th, is an annual event that lets participants do just that. Pre-university students of all skill levels, ages 13-17, are invited to participate. According to the contest's webpage, over 4500 students from 99 countries have completed work in the contest since 2010.

Google partners with a number of open-source organizations (this year’s bunch includes Ubuntu and JBoss), giving participants the opportunity to claim tasks and work with mentors to complete assignments. Assignments range from developing new code for an application or webpage, to installing software and documenting the process, to designing company laptop stickers and t-shirts. Google Code-in has tasks for everyone and is a great introduction to the world of programming. Additionally, participants can win prizes ranging from t-shirts to a trip to Google HQ! If you’re interested, we encourage you to sign up at https://codein.withgoogle.com/.

Photo Credit: Gigaom

AI has been making great advances in social media. On November 27th, Guy Rosen, VP of Facebook Product Management, announced in a blog post that Facebook is using AI to help identify suicidal users and connect them to help. This tool has been in use in the US for months and will now be rolled out in other countries as well. Rosen wrote that in the last month alone, Facebook ‘worked with first responders on over 100 wellness checks based on reports’ thanks to this technology. The tool uses AI for pattern recognition to ‘help accelerate the most concerning reports’ and inform local authorities, writes Rosen. Pattern recognition helps Facebook flag posts and live streams in which users may be expressing suicidal thoughts. It also searches for comments like ‘Are you ok?’ and ‘Can I help?’, which can be strong indicators that someone needs support. It then prioritizes the posts and sends the more pressing ones to be reviewed first.

Snapchat, too, has recently unveiled AI image-recognition technology in its latest update. It recognizes objects in pictures and then offers filters which are tailor-made to match those objects. For example, if you take a picture of food, Snapchat will offer filters with phrases like ‘get in my belly’ and ‘eatin’ good’. This is not the first time that the company has incorporated object recognition in its app. Snapchat already allows you to search for certain objects, places and events in ‘stories’. For example, if you search for ‘beach’, you will get snaps of people at beaches, and if you search for ‘football’ you will find snaps of people at football games. This is just the beginning of AI being incorporated into social media and, eventually, all aspects of our daily lives.

Sources: CNN tech, Facebook newsroom, Flipboard/AI, Business Insider
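The prioritization step described for Facebook's tool can be sketched roughly as follows. This is only a toy illustration: Facebook's real system is not public in this detail and relies on trained pattern-recognition models, whereas the sketch below simply counts comments containing the two phrases quoted above. The names `CONCERN_PHRASES`, `concern_score` and `review_order` are hypothetical.

```python
import heapq

# The two example phrases quoted in the post; a real system would learn
# its signals from data rather than use a fixed list (assumption).
CONCERN_PHRASES = ("are you ok", "can i help")


def concern_score(comments):
    """Count how many comments contain at least one concern phrase."""
    return sum(
        any(phrase in comment.lower() for phrase in CONCERN_PHRASES)
        for comment in comments
    )


def review_order(posts):
    """Return post ids ordered so the most concerning posts come first.

    posts: dict mapping a post id to its list of comment strings.
    Ties keep their original order.
    """
    # heapq is a min-heap, so the score is negated to pop high scores first;
    # the index breaks ties in favor of posts seen earlier.
    heap = [
        (-concern_score(comments), index, post_id)
        for index, (post_id, comments) in enumerate(posts.items())
    ]
    heapq.heapify(heap)
    return [heapq.heappop(heap)[2] for _ in range(len(heap))]
```

Given three posts where one has two concerned comments, one has a single concerned comment and one has none, `review_order` returns them in that order, so human reviewers would see the most pressing post first.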
